MIDI and digital audio are different ways of recording information about sound. MIDI is a set of instructions about how to generate a sound (using a MIDI device), whereas digital audio is a representation of an actual sound wave. They are both useful ways of producing and arranging music, and each has its own advantages and disadvantages.

In this article we’ll look at:

- Is MIDI the same as digital audio?

- The differences between MIDI data and digital audio data

- What is MIDI?

- What is digital audio?

- The advantages and disadvantages of MIDI data over digital audio data

- Conclusion

Is MIDI the same as digital audio?

In a word—no.

MIDI is not the same as digital audio.

MIDI data is like a set of instructions, while digital audio data is a digital representation of sound waves.

The differences between MIDI data and digital audio data

Consider hitting the note C on a keyboard. Let’s assume that the keyboard generates audio signals and also generates MIDI data (some keyboards only do one, or the other, but we’ll assume our keyboard does both).

We’ll also assume that the keyboard is connected to a MIDI-enabled audio interface via both a line-in port and a MIDI port.

When you hit note C, a sound is generated that corresponds to (ie. it’s the same frequency as) the note C.

Two things will then happen:

- The keyboard will send an audio signal (an electrical representation of sound) to the audio interface—this will then be converted from an analog form to a digital form through a process called analog-to-digital conversion (ADC)

- The keyboard will also send MIDI information to the audio interface, and in turn the audio interface will send the information to a virtual instrument in a computer-based digital-audio-workstation (DAW) or other MIDI-enabled digital device

How are these two things different?

The audio signal is an actual representation of the note C (ie. a representation of an actual sound), whereas the MIDI information is merely a description of what you did on the keyboard to produce the note C—a set of instructions on how to do it.

The table below helps to explain this:

| Action | Audio Information | MIDI Information |

|---|---|---|

| Hit the note C | The keyboard generates an electrical signal that represents the note C, ie. a signal with a frequency that corresponds to the sound of the note C. A connected audio interface then converts this signal from an analog form to a digital form so that it can be processed by a computer. | The keyboard generates a set of instructions describing how the note C was played, for example: (1) start playing the note (“Note On”); (2) which note to play (“Key”); (3) the velocity of the note; (4) the pressure on the note; (5) stop playing the note (“Note Off”). This information is already in a digital form and can be processed by a computer. |

So, MIDI and digital audio are fundamentally different ways of capturing a musical sound.

This is reflected, for instance, in the way that MIDI and audio data are recorded differently in musical compositions (ie. MIDI tracks vs audio tracks) by modern DAWs.

MIDI and digital audio data are both, however, digital forms of data and can be processed by computer systems.

But, MIDI data takes up far less computer memory than typical digital audio data, since it’s only a set of instructions about how to produce a sound rather than an actual representation of the sound.

The table below summarizes the differences between MIDI and digital audio:

| Attribute | MIDI Data | Digital Audio Data |

|---|---|---|

| Is it a form of digital information? | Yes | Yes |

| Can it be edited and processed by a computer system? | Yes | Yes |

| Is it a representation of an actual sound wave? | No—it is a set of instructions about how a sound was generated using a MIDI-enabled device (or software) | Yes—it is a digital representation of an analog sound wave |

| How is it generated? | By using MIDI-enabled devices, eg. MIDI keyboards, controllers, or sequencers | By converting analog audio (electrical) signals of sound into a digital form through analog-to-digital conversion (ADC) |

| How is it heard by humans? | By using a MIDI-enabled device that follows the instructions embedded in MIDI data to play a sound | By converting the digital audio data to an analog form (through digital-to-analog conversion, or DAC) and playing this through an amplified speaker system or headphones |

| Does it use a lot of computer memory? | No—since it only contains instructions, it doesn’t take up much memory | Yes—since it’s a representation of actual sound waves, it takes up much more memory than MIDI data |

| Does it need any special equipment? | Yes—MIDI-enabled devices, MIDI software, and MIDI cables | Yes—ADC and DAC-enabled devices |

Let’s now take a closer look at MIDI and digital audio to better understand what they are and how they differ.

What is MIDI?

MIDI is an acronym for Musical Instrument Digital Interface. It evolved as a communication standard—the MIDI protocol—for synthesizers in the 1980s. It was developed by a group of major synth manufacturers at the time, including Korg, Roland, and Yamaha.

MIDI allows synthesizers (or other MIDI-enabled devices) to communicate with each other.

Using MIDI, you can control multiple synthesizers from just one synthesizer—this was popular in the 1980s, for instance, with the layered synth sounds that featured in 80s-era pop music.

MIDI also lets you sequence music. This means that you can string together a series of notes and chords—just the instructions, of course, not the actual sounds—to form a song or piece of music.

Besides synthesizers, examples of other MIDI-enabled devices are (physical) electronic drum machines and sound modules, virtual (software) instruments, and computer-based DAWs.

MIDI messages

The specific set of instructions that are sent through the MIDI protocol is called a MIDI message.

MIDI messages consist of bytes of information and are usually two to three bytes long: a status byte that identifies the type of message, followed by one or two data bytes. Each MIDI byte is 8 bits long.
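To give a feel for how compact these messages are, here is a minimal Python sketch that builds a Note On and a Note Off message by hand. The helper names `note_on` and `note_off` are made up for this example, and it assumes the common MIDI 1.0 layout of one status byte followed by two data bytes, with middle C as key number 60.

```python
def note_on(channel: int, key: int, velocity: int) -> bytes:
    """Build a 3-byte Note On message: status byte 0x90 combined with the channel."""
    if not (0 <= channel <= 15 and 0 <= key <= 127 and 0 <= velocity <= 127):
        raise ValueError("channel must be 0-15; key and velocity must be 0-127")
    return bytes([0x90 | channel, key, velocity])


def note_off(channel: int, key: int, velocity: int = 0) -> bytes:
    """Build a 3-byte Note Off message: status byte 0x80 combined with the channel."""
    if not (0 <= channel <= 15 and 0 <= key <= 127 and 0 <= velocity <= 127):
        raise ValueError("channel must be 0-15; key and velocity must be 0-127")
    return bytes([0x80 | channel, key, velocity])


MIDDLE_C = 60  # key number commonly used for middle C (an assumption of this sketch)

press = note_on(0, MIDDLE_C, 100)   # start playing middle C fairly firmly
release = note_off(0, MIDDLE_C)     # stop playing it

print(press.hex(" "), "|", release.hex(" "))  # 90 3c 64 | 80 3c 00
```

Pressing and releasing one key therefore takes only six bytes in this sketch, which is why MIDI data is so small compared with recorded sound.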

Using MIDI messages, you can use a MIDI keyboard—or any other type of MIDI controller—to trigger sounds on other MIDI devices. The triggered devices may generate their own sounds or send the MIDI information on to other MIDI devices.

A series of MIDI messages, stored together with timing information, can be saved as a MIDI file. The format used for these files is the Standard MIDI File (SMF) format, often simply called the MIDI file format.

There are several classifications for MIDI messages, and some of these are shown in the table below:

| MIDI Message Type | MIDI Message Sub-Type | Description |

|---|---|---|

| Channel messages | Voice messages | To turn notes on and off, assign particular sounds, and alter the active sound |

| Channel messages | Mode messages | Information on how a MIDI device should process MIDI voice messages |

| System messages | Common messages | General system data |

| System messages | Real-time messages | Synchronization data |

| System messages | Exclusive messages | Non-standard data |
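The classification in the table can be sketched in Python by looking at the status byte of a message. The function name `classify_status` is hypothetical, and the byte ranges used are the ones commonly documented for MIDI 1.0, so treat this as an illustration rather than a complete parser.

```python
def classify_status(status: int) -> str:
    """Return the broad message category for a MIDI 1.0 status byte."""
    if not 0x80 <= status <= 0xFF:
        raise ValueError("a status byte has its top bit set (0x80-0xFF)")
    if status < 0xF0:
        return "channel"            # voice and mode messages
    if status == 0xF0:
        return "system exclusive"   # start of manufacturer-specific data
    if status < 0xF8:
        return "system common"      # general system data
    return "system real-time"       # synchronization data, eg. timing clock


for status in (0x90, 0xF0, 0xF2, 0xF8):
    print(hex(status), "->", classify_status(status))
```

Note that voice and mode messages can't be told apart from the status byte alone. Mode messages are sent as Control Change messages that use a reserved range of controller numbers, so a fuller parser would also need to inspect the first data byte.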

MIDI ports and cables

MIDI messages are transmitted between devices through MIDI cables, and these cables connect to MIDI ports on each device.

In early MIDI equipment, the only way to connect MIDI devices was through DIN 41524 connectors. These connectors have five pins and only allow data to travel in one direction. This means that you need a separate cable for each direction of transmission between devices.

MIDI devices with DIN connections, such as MIDI-enabled synthesizers or audio interfaces, typically have a MIDI IN port and a MIDI OUT port. Some devices also have a MIDI THRU port.

To send MIDI information from one device to another—from a synthesizer to an audio interface, for instance—you should connect the MIDI OUT port of the sending device (eg. the synthesizer) to the MIDI IN port on the receiving device (eg. the audio interface).

You would also need to do the reverse connection if you want to send and receive MIDI information on the same device.

So, you would need both ports on both devices connected to each other, ie. connect the MIDI OUT of your synth to the MIDI IN of your audio interface, and the MIDI OUT of your interface to the MIDI IN on your synth.

The MIDI THRU port is also available on some devices. This passes on exactly the MIDI information being received on the MIDI IN port. This can be handy, for instance, if you want to daisy-chain several MIDI devices together, allowing them all to be controlled by a single MIDI device.

MIDI ports are connected together by using 5-pin MIDI cables.

In modern MIDI equipment, USB connections are often also used for sending and receiving MIDI data rather than DIN connectors.

With USB-enabled MIDI devices, you can connect directly to a computer or other USB-enabled MIDI device, and send and receive MIDI information just like with any other USB connection.

Since USB is bi-directional, you don’t need a separate connection for each direction of travel (as you do for MIDI cables)—a single USB connection can send and receive MIDI data from a device (along with other information).

Advances in MIDI (MIDI 2.0)

The MIDI protocol is continuing to improve.

Some of the limitations discussed above, such as the one-directional travel of MIDI messages, are addressed by an upgraded version of the MIDI protocol called MIDI 2.0, which is designed around two-way communication between devices.

The versatility and applications of MIDI are also increasing under MIDI 2.0.

MIDI 2.0 offers a wide range of new capabilities and is a great improvement on the old (MIDI 1.0) protocol that’s been around since the 1980s!

What is digital audio?

Digital audio is a digital representation of actual (analog) sound waves.

So, unlike MIDI data, which is only a description of how to produce a sound using a MIDI-enabled device (ie. a set of instructions), digital audio is a representation of actual sound.

Digital audio is generated as follows:

- When a sound is made—if you strike the note C on a piano, for instance—vibrations are generated in air (from the piano strings)

- These vibrations carry the frequency and amplitude of the note C; they travel through the air and can be heard by human ears (and interpreted by human brains)

- If you place a microphone near the piano, then the microphone picks up the vibrations and converts them to an electrical signal

- This signal represents, or emulates, the frequency and amplitude of the sound wave of the note C

The electrical audio signals produced by the microphone in this example are analog signals—they are continuous signals that vary over time in a natural, real-time manner.

Analog signal amplitudes can take on any value in a range of values (ie. from a continuum of possible values), varying over time.

Analog-to-digital conversion (ADC)

In most modern digital audio setups, microphones connect to audio interfaces, and these, in turn, connect to a computer that runs DAW software.

When an analog audio signal passes from a microphone to the audio interface, it is converted to a digital audio signal through a process called analog-to-digital conversion (ADC).

ADC works as follows:

- A series of periodic “snapshots”, or samples, is taken of an analog signal

- At each sample, a measurement of the amplitude of the analog signal is taken

- Each measurement is then recorded in a digital form (using a binary number system)

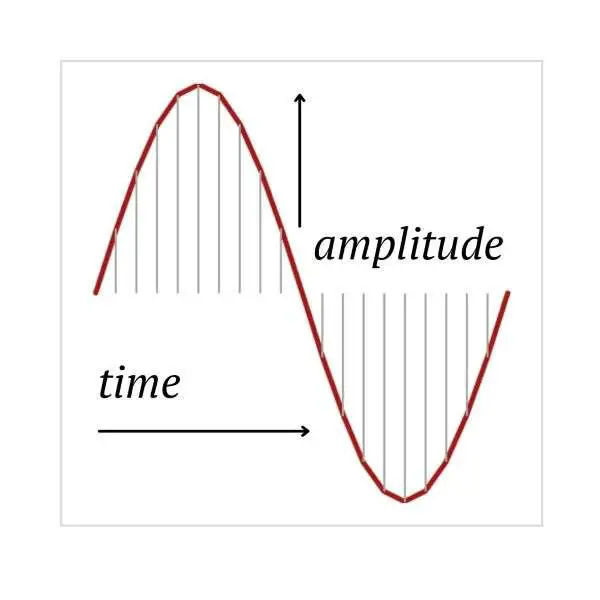

The picture below shows how a continuous analog signal can have periodic samples taken (indicated by the vertical grey lines) to record the amplitude of the wave at points in time.

After the ADC process, the audio signal is recorded as a series of (binary) numbers, representing the periodic amplitude measurements of the original analog signal. Since these numbers are binary (which is what modern computer systems are based on), each measurement contains a series of bits (0s and 1s).
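The sampling steps described above can be imitated in a few lines of Python. This is only a sketch: a sine wave stands in for the analog signal, and the sample rate (44,100 samples per second), bit depth (16 bits), and frequency for middle C (about 261.63 Hz) are assumptions chosen for illustration, not values taken from any particular audio interface.

```python
import math

SAMPLE_RATE = 44_100                       # samples per second (assumed)
BIT_DEPTH = 16                             # bits per sample (assumed)
MAX_AMPLITUDE = 2 ** (BIT_DEPTH - 1) - 1   # 32767, largest signed 16-bit value


def sample_sine(frequency_hz: float, duration_s: float) -> list[int]:
    """Take periodic amplitude measurements of a sine wave and store them as integers."""
    sample_count = round(SAMPLE_RATE * duration_s)
    samples = []
    for i in range(sample_count):
        t = i / SAMPLE_RATE                                    # time of this snapshot
        amplitude = math.sin(2 * math.pi * frequency_hz * t)   # between -1.0 and 1.0
        samples.append(round(amplitude * MAX_AMPLITUDE))       # measurement as a whole number
    return samples


samples = sample_sine(261.63, 0.01)   # roughly middle C, for 10 milliseconds
print(len(samples))                   # 441 samples
print(format(samples[1] & 0xFFFF, "016b"))   # one measurement shown as 16 bits
```

Even 10 milliseconds of sound becomes hundreds of numbers here, which hints at why digital audio needs so much more storage than MIDI.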

Digital audio data and DAC

After being converted to a digital form, an audio signal can be processed using all of the power of modern computing. It can be passed into specialized software (DAWs) for editing, mixing, mastering, or even for digital effects (eg. echo, delay, or reverb) using digital signal processing techniques.

So, digital audio data is just a series of binary numbers that are representations of sound waves.

Once the digital audio data has been processed in whatever way you like, you would need to convert it back into a form that you can hear—this process is called digital-to-analog conversion or DAC.

DAC is a reversal of the ADC process, converting digital audio data back to analog (electrical) audio signals.

Some loss of fidelity can occur when this is done, depending on the parameters used, but modern digital audio systems retain a very high proportion of the original (analog) information.
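One source of this loss is the rounding that happens when each measurement is stored as a whole number. The Python sketch below assumes 16-bit samples and models only that rounding step (real converters involve more than this), with `quantize` and `reconstruct` as hypothetical helper names.

```python
import math

MAX_AMPLITUDE = 32_767   # largest value of a signed 16-bit sample (assumed bit depth)


def quantize(value: float) -> int:
    """Store an amplitude between -1.0 and 1.0 as a whole number (the ADC side)."""
    return round(value * MAX_AMPLITUDE)


def reconstruct(sample: int) -> float:
    """Turn a stored whole number back into an amplitude (the DAC side)."""
    return sample / MAX_AMPLITUDE


original = [math.sin(2 * math.pi * i / 100) for i in range(100)]
round_trip = [reconstruct(quantize(value)) for value in original]

worst_error = max(abs(a - b) for a, b in zip(original, round_trip))
half_step = 0.5 / MAX_AMPLITUDE   # rounding can be off by at most half a step

print(worst_error <= half_step + 1e-12)   # True
```

With 16 bits the steps are so small that the rounding error is a tiny fraction of the signal, which is consistent with the point that little of the original information is lost.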

A re-converted analog audio signal would then need to be converted back to vibrations in air so that you can hear it—this is done through amplifiers and speaker systems, or through headphones.

The advantages and disadvantages of MIDI data over digital audio data

We’ve seen that MIDI differs from digital audio in a number of ways—but what are the advantages of using MIDI over digital audio?

Advantages of MIDI

There are three key advantages that MIDI data has over digital audio:

1. MIDI files are much smaller than digital audio files

Digital audio files can be 30 or more times larger than the equivalent MIDI files (sometimes much more).

This makes the storage and transmission of digital audio much more challenging than MIDI.

If you’re working “on the road” with a small device or a low-bandwidth connection, for instance, then smaller file sizes are a big advantage.
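A rough back-of-the-envelope comparison shows where the size difference comes from. The Python sketch below assumes uncompressed stereo audio at 44,100 samples per second and 16 bits per sample, and counts only the Note On and Note Off bytes on the MIDI side. Real MIDI files also carry timing and header data, and compressed audio formats are smaller, so the exact ratio will vary.

```python
SAMPLE_RATE = 44_100    # samples per second (assumed)
BYTES_PER_SAMPLE = 2    # 16-bit samples (assumed)
CHANNELS = 2            # stereo (assumed)


def audio_bytes(duration_s: float) -> int:
    """Bytes needed for uncompressed audio of the given length."""
    return round(SAMPLE_RATE * duration_s) * BYTES_PER_SAMPLE * CHANNELS


def midi_bytes(note_count: int) -> int:
    """Bytes for one 3-byte Note On and one 3-byte Note Off per note (no timing data)."""
    return note_count * 6


audio = audio_bytes(1.0)   # one second of sound
midi = midi_bytes(4)       # four notes played within that second

print(audio, midi, audio // midi)   # 176400 24 7350
```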

2. MIDI files are easy to edit

Since they contain instructions, and not actual (representations of) sound waves, it’s easy to change the sequence or type of instructions in a MIDI file. This is a lot harder to do with digital audio.

Consider the example of changing a chord. If you wanted to change a C-Major triad (C-E-G) to a C-Minor triad (C-Eflat-G), for instance, then this is as easy as changing the data for “play the E note” to “play the E-flat note” when using MIDI.

With digital audio, you would need to alter the very sound that the data represents (ie. change the “sound” from a C-Major sound to a C-Minor sound), and there’s no easy way of doing this other than by re-recording the chord.
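The chord example can be sketched in Python to show how small the MIDI edit is. It assumes the usual MIDI key numbering, in which middle C is 60 and each step of 1 is a semitone; the function name `major_to_minor` is made up for this illustration.

```python
C_MAJOR = [60, 64, 67]   # key numbers for C, E, and G (assumed numbering)


def major_to_minor(triad: list[int]) -> list[int]:
    """Lower the middle note of a major triad by one semitone."""
    root, third, fifth = triad
    return [root, third - 1, fifth]


print(major_to_minor(C_MAJOR))   # [60, 63, 67], ie. C, E-flat, and G
```

The whole edit is a change to one number, whereas the digital audio version of the chord would contain thousands of samples with all three notes mixed together.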

3. MIDI files are ‘portable’ across instruments

Since MIDI files contain only instructions, the actual instruments that you choose to play the instructions with (ie. play the notes that the instructions describe) can be selected and changed at will (provided, of course, that the instruments are MIDI-enabled).

With digital audio, once an instrument is recorded it can’t be changed.
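One way this shows up in practice is the Program Change message, which asks a device to switch to a different sound. The Python sketch below is illustrative only: `program_change` is a hypothetical helper, and the program numbers assume the General MIDI sound set, which not every device follows. In a DAW you would more likely just assign the MIDI track to a different virtual instrument.

```python
def program_change(channel: int, program: int) -> bytes:
    """Build a 2-byte Program Change message: status byte 0xC0 combined with the channel."""
    if not (0 <= channel <= 15 and 0 <= program <= 127):
        raise ValueError("channel must be 0-15 and program must be 0-127")
    return bytes([0xC0 | channel, program])


PIANO = 0     # General MIDI Acoustic Grand Piano (listed as program 1 when counting from 1)
VIOLIN = 40   # General MIDI Violin (listed as program 41 when counting from 1)

melody = [60, 62, 64, 65]   # the same note instructions, whichever sound is chosen

as_piano = program_change(0, PIANO)
as_violin = program_change(0, VIOLIN)

print(as_piano.hex(" "), "|", as_violin.hex(" "), "|", melody)
```

The note instructions in `melody` stay exactly the same; only the two-byte message that selects the sound changes.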

Disadvantages of MIDI

There are also a couple of important disadvantages to using MIDI over digital audio that are worth noting:

1. MIDI data only works with MIDI instruments

You can’t use MIDI data to capture voice or natural sounds; it can only be used to send instructions to MIDI-enabled devices that produce their own sound.

And while there are many virtual (software) instruments available, and also physical (electronic) MIDI instruments such as keyboards or drum machines, there aren’t many MIDI-enabled guitars or other types of instruments.

You are limited in what you can play if you only use MIDI data.

With digital audio, just about any sound or any instrument can be captured, either directly (through line-in connections) or through microphones connected to a suitable audio interface.

2. MIDI is focused on music production

MIDI was developed by the music equipment industry and was first deployed (at scale) for use in synthesizers. MIDI has, to date, had limited use outside of core music production.

Digital audio, on the other hand, can be used in a wide array of audio applications other than in music production. For purely voice productions, such as in podcasting, or where natural sounds need to be captured, digital audio is versatile and effective.

MIDI 2.0, however, does offer a wider range of applications than the old MIDI protocol (MIDI 1.0), so the applications of MIDI in the future may extend far beyond where it has in the past.

Either way, it’s interesting to note that using MIDI actually depends on digital audio—when a MIDI file is sent to a MIDI device, such as a synthesizer, the sounds generated by the MIDI device are often from sampled, digital audio files. The MIDI file merely instructs—triggers—the digital audio file to play.

Conclusion

MIDI data and digital audio data are different ways of capturing information about sound.

MIDI data is a set of instructions about how a sound is produced, which can then be used to reproduce the sound using a MIDI device.

Digital audio data is a representation of actual (analog) sound waves, captured in a digital form. To play back a digital audio file, the digital data needs to be converted back to an analog form and played through speaker systems or headphones.

There are three key advantages of using MIDI data over digital audio data:

- MIDI files are small compared with digital audio files—this makes it much easier to store and transfer MIDI data

- MIDI files are easy to edit—they can be changed by simply changing the types or sequence of instructions, which is much easier than editing actual sound waves captured by digital audio

- MIDI files are portable across instruments—you can use any MIDI-enabled device to play the instructions contained in a MIDI file, so it’s easy to swap and change as you like, unlike digital audio where once an instrument is recorded, it’s committed

There are also a couple of disadvantages of using MIDI data that are worth noting:

- MIDI works only with MIDI instruments—you can only trigger sounds on MIDI instruments when using MIDI data, and there’s a limited range of instruments to play (generate) MIDI information with

- MIDI is focused on music production—while MIDI offers a range of capabilities when producing or arranging music, it has had a limited use with other types of audio production, such as in voice-specific applications like podcasting—this may be changing with advances in the MIDI protocol, however, under MIDI 2.0

Whatever your purpose, if you’re working with digital music then there are situations where either MIDI or digital audio would work best for you.

Fortunately, it’s easy to have both options available to you with the technology available today. This is especially the case if you have a MIDI-enabled audio interface that will allow you to make the most of digital audio and MIDI depending on your needs.